This means only one collectd instance with the docker-collectd-plugin is needed to point at the Swarm manager’s API endpoint. If you’re using Docker Swarm, the Swarm API endpoint exposes the full Docker remote API, reporting data for all the containers executed in the swarm. Simply configure the docker-collectd-plugin to talk to the local Docker daemon on each host: The simplest and most reliable way of getting metrics from all your containers is running collectd on each host that has a Docker daemon. For more information, check out our introductory blog post on Monitoring Docker at Scale with Splunk Infrastructure Monitoring. The best way to collect those metrics and send them to your monitoring system is to use collectd and the docker-collectd-plugin. Whether or not you plan on collecting application-level metrics, you should definitely get your containers’ metrics first. The Docker daemon exposes very detailed metrics about CPU, memory, network, and I/O usage that are available for each running container via the /stats endpoint of Docker’s remote API. If you need system-level metrics from your containers, Docker has you covered. Do you run lightweight, barebone, single-process Docker containers or heavyweight images with supervisord (or something similar)?.If you have application-specific metrics, do you poll those metrics from your application, or are they being pushed to some external endpoint? If you poll the metrics, are they available through a TCP port you’re comfortable exposing from your container?.Is your application placement static or dynamic? (i.e., Do you use a static mapping of what runs where or do you use dynamic container placement, scheduling, and binpacking?).Do you want to track application-specific metrics or just system-level metrics?.Your answers may differ for each application and your approach to monitoring should reflect those differences.

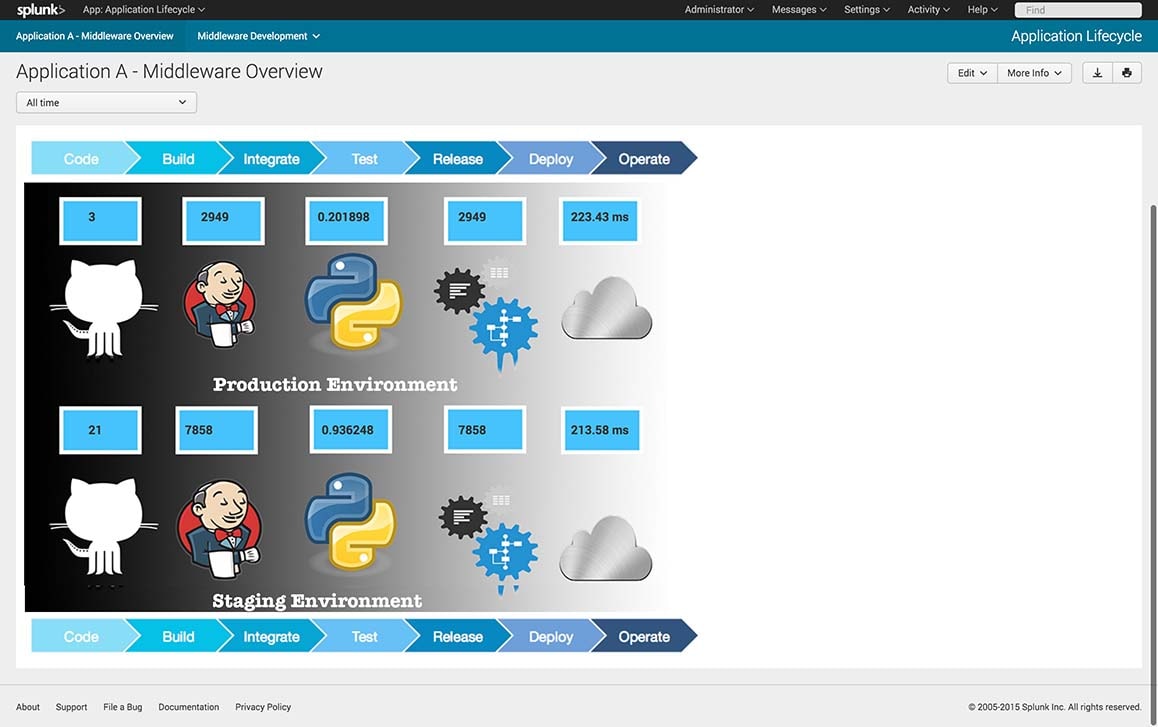

What level of observability can you can get from your Dockerized application?īetter yet, to understand the changes a microservices regime and Dockerized environment might cause for your monitoring strategy, you should first answer these four simple questions.What Docker image philosophy do you follow?.What orchestration technology do you use?.The answer, as is so often the case, is “it depends.” It depends on the dependencies of your environment and is affected by your use case and objectives: The question we should be asking then becomes: “How does Docker change how I monitor my applications?” Running your applications in Docker containers only really changes how they are packaged, scheduled, and orchestrated-not how they run. It needs to be done, and there are solutions for each of these. Monitoring the Docker daemon, the Kubernetes master, or even the Mesos scheduler isn’t complicated. Asking the Right QuestionĮven as IT, operations, and engineering orgs come together around the value of and objectives for containers, one question endures: “How do I monitor Docker in my production environment?” The source of confusion here comes from the fact that we’re asking the wrong question. In this post, I’ll discuss what’s important to monitoring Dockerized environments, how to collect container metrics you care about, and your options for collecting application metrics. This is the first in a series of blogs on monitoring Docker containers. Along the way, we’ve learned how to monitor our Docker-based infrastructure and how to get maximum visibility into our applications, wherever and however they run. Every single application we manage executes within a Docker container. Splunk Infrastructure Monitoring has been running Docker containers in production since 2013. However, operationalizing Docker can also mean more complexity, an abundance of infrastructure and application data, and greater need for monitoring and alerting on the production environment.

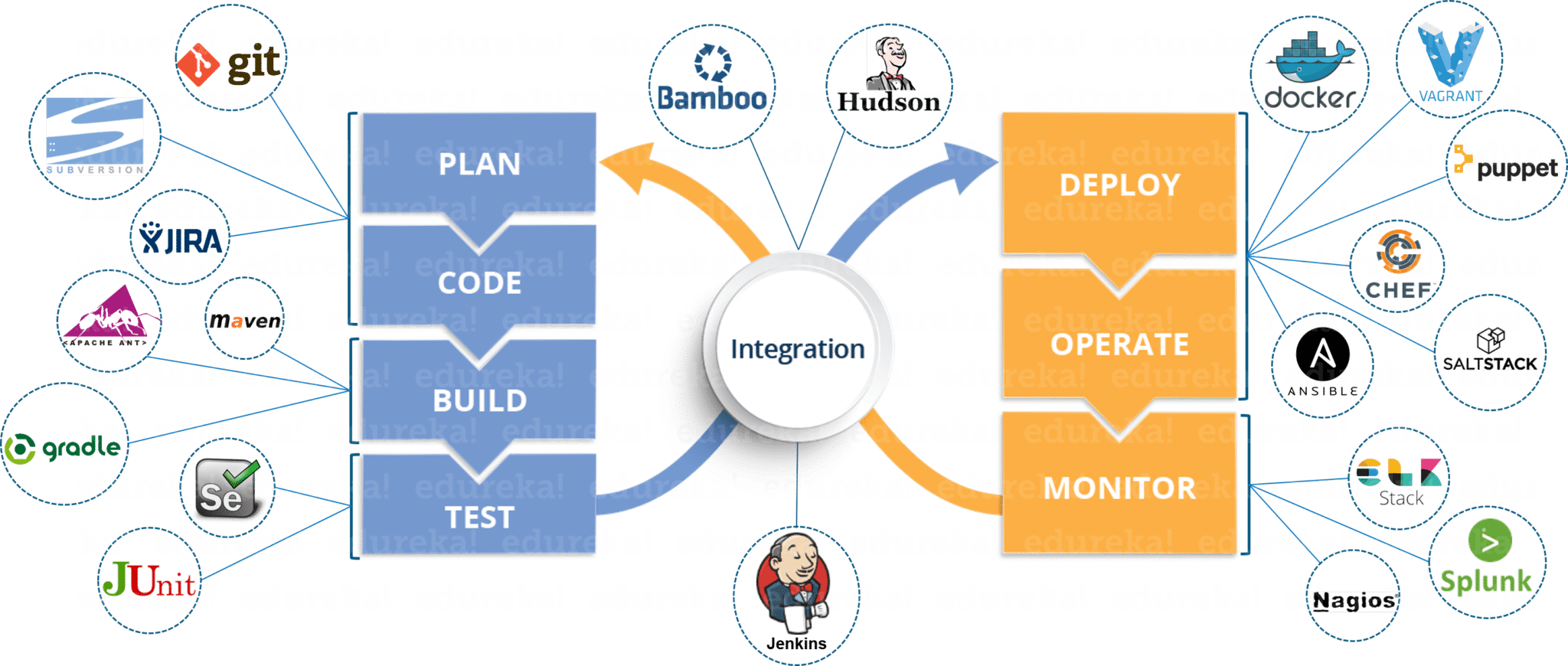

The Docker movement gives product teams more freedom in their technology choices since they’re empowered to deploy and manage their applications in production themselves. Containers ready for cloud architecture brought production operations closer to development and helped make microservices the backbone of a more flexible, aggressive approach to building software architecture. Docker shook the DevOps world a couple of years ago.
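To make the per-container /stats endpoint of Docker’s remote API more concrete, here is a minimal Python sketch of reading one stats sample from a local Docker daemon and reducing it to a few headline numbers. It is an illustration only, not how the docker-collectd-plugin is implemented. The names `UnixHTTPConnection`, `fetch_stats`, and `summarize` are hypothetical, and the socket path `/var/run/docker.sock` is an assumption about a default local install. The CPU calculation follows the commonly documented delta formula over the `cpu_stats` and `precpu_stats` fields.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP over a Unix domain socket (hypothetical helper for this sketch)."""

    def __init__(self, socket_path, timeout=3):
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        # Replace the default TCP connect with a Unix socket connect.
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def fetch_stats(container_id, socket_path="/var/run/docker.sock"):
    """Fetch a single stats sample; the socket path is an assumed default."""
    conn = UnixHTTPConnection(socket_path)
    try:
        # stream=false asks the daemon for one JSON object instead of a stream.
        conn.request("GET", f"/containers/{container_id}/stats?stream=false")
        resp = conn.getresponse()
        if resp.status != 200:
            raise RuntimeError(f"Docker API returned HTTP {resp.status}")
        return json.loads(resp.read())
    finally:
        conn.close()


def summarize(stats):
    """Reduce a raw stats payload to CPU percent, memory, and network totals."""
    cpu = stats["cpu_stats"]
    pre = stats["precpu_stats"]
    cpu_delta = cpu["cpu_usage"]["total_usage"] - pre["cpu_usage"]["total_usage"]
    sys_delta = cpu.get("system_cpu_usage", 0) - pre.get("system_cpu_usage", 0)
    # Fall back to the per-CPU list length, then to 1, if online_cpus is absent.
    ncpu = (cpu.get("online_cpus")
            or len(cpu["cpu_usage"].get("percpu_usage") or [])
            or 1)
    if sys_delta > 0 and cpu_delta >= 0:
        cpu_percent = (cpu_delta / sys_delta) * ncpu * 100.0
    else:
        cpu_percent = 0.0

    mem = stats.get("memory_stats") or {}
    nets = stats.get("networks") or {}
    return {
        "cpu_percent": cpu_percent,
        "memory_usage_bytes": mem.get("usage", 0),
        "memory_limit_bytes": mem.get("limit", 0),
        "rx_bytes": sum(n.get("rx_bytes", 0) for n in nets.values()),
        "tx_bytes": sum(n.get("tx_bytes", 0) for n in nets.values()),
    }
```

On a host with a running daemon, calling `summarize(fetch_stats("<container id>"))` would return a small dictionary that could be forwarded to a monitoring system. In practice, collectd with the docker-collectd-plugin handles the polling, naming, and shipping of these metrics for you.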
